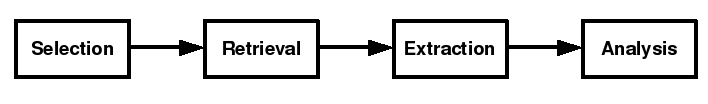

As we have seen before, this figure shows the stages of a typical approach to a post-hoc study of FLOSS, in which the researcher works rather like a digital archaeologist. Each stage includes some number of steps and yields some outcomes, and each outcome may or may not feed into the following stage. In this post, I will discuss the selection stage. Remember that this is the method I have preferred so far, and one that a number of my peers have used, in whole or in part. It is a method, not the only method.

Selection

The point of selection is to choose the metrics that will measure the attributes you are interested in, and to compose a list of projects to study. On selecting metrics, I cannot be especially general: they are closely tied to the goals of your study, and you need an effective way of measuring your success at achieving those goals. My personal preference is the “Goal-Question-Metric” (GQM) Approach. You can read about it elsewhere, but what attracts me to it is that it is a software-engineering-specific method that helps you arrive at the right measures by forming the questions that must be answered to achieve the goals you have set. These questions can also “suggest” the hypotheses needed in your study. It is not perfect, but “Goal-Question-Metric” is a useful parallel to “Research Question-Hypotheses-Measures”. I do think it important to do metric selection first; the reason will be apparent in what I shall say next.
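To make the goal-to-metric chain concrete, here is a minimal sketch of how a GQM breakdown might be written down before any data is collected. The goal, questions and metric names are invented for illustration (they are not from any particular study), and the `Goal` and `Question` classes are my own hypothetical helpers, not part of any GQM tool.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Question:
    """A question that must be answered to assess the goal."""
    text: str
    metrics: List[str] = field(default_factory=list)


@dataclass
class Goal:
    """A study goal, refined into questions, each refined into metrics."""
    text: str
    questions: List[Question] = field(default_factory=list)

    def metrics(self) -> List[str]:
        """Every metric the goal needs, de-duplicated, in first-seen order."""
        seen: List[str] = []
        for question in self.questions:
            for metric in question.metrics:
                if metric not in seen:
                    seen.append(metric)
        return seen


# Hypothetical example: the goal and metrics below are assumptions.
goal = Goal(
    "Understand how forum activity relates to release frequency",
    [
        Question("How active is the project forum?",
                 ["posts per month", "distinct posters per month"]),
        Question("How often does the project release?",
                 ["releases per year"]),
    ],
)

for metric in goal.metrics():
    print(metric)
```

Writing the breakdown out like this also makes it obvious which data sources each project must have, which is where the filtering discussed next comes from.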

Additionally, you will probably want to set some parameters for the investigation, usually to ensure that it remains valid. For example, if you are looking into some aspect of, say, forum activity, then it probably makes no sense to include projects for which no forum activity exists. (At the same time, you should report what proportion of your initial sample is disqualified.) This may shrink the pool of projects you can choose from by eliminating some candidates, but it should not affect the metrics you choose: the investigation should be guided by what you want to measure, not by how easy something is to measure. Sometimes the filtering is made quite simple for you. For instance, FLOSSMole is a service that provides meta-data about individual FLOSS projects in nicely-formatted lists. If you wish to prune such a list, it is easy to write a software tool that does it quickly for you, leaving only the “valid” candidates. Ask nicely and you could borrow mine.

The so-called “filters” I have found myself applying in past work have included:

- Programming language

- Version control system used

- Product size

- Development status

These factors can affect the validity of the study (e.g. can different programming languages be compared fairly?), or they can be technical considerations (e.g. do I have tools that can analyse these programming languages?). Both need careful thought.
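The kind of pruning tool described above can be very small. What follows is a sketch only, not my actual tool: the field names (`language`, `vcs`, `size_loc`, `status`), the sample records and the thresholds are all assumptions made for illustration, and they do not reflect FLOSSMole's real data format. It keeps the projects that pass all four filters and reports the proportion disqualified.

```python
def passes_filters(project, languages, vcs, min_size_loc, statuses):
    """True if a project record satisfies every filter."""
    return (
        project["language"] in languages
        and project["vcs"] in vcs
        and project["size_loc"] >= min_size_loc
        and project["status"] in statuses
    )


def prune(projects, **criteria):
    """Return (valid candidates, proportion of the input disqualified)."""
    kept = [p for p in projects if passes_filters(p, **criteria)]
    if not projects:
        return kept, 0.0
    disqualified = (len(projects) - len(kept)) / len(projects)
    return kept, disqualified


# Hypothetical metadata records; the names and values are invented.
projects = [
    {"name": "alpha", "language": "C", "vcs": "svn", "size_loc": 12000, "status": "stable"},
    {"name": "beta", "language": "Java", "vcs": "cvs", "size_loc": 800, "status": "stable"},
    {"name": "gamma", "language": "C", "vcs": "cvs", "size_loc": 5000, "status": "planning"},
    {"name": "delta", "language": "C++", "vcs": "svn", "size_loc": 30000, "status": "mature"},
]

kept, disqualified = prune(
    projects,
    languages={"C", "C++"},
    vcs={"svn", "cvs"},
    min_size_loc=1000,
    statuses={"stable", "mature"},
)

print([p["name"] for p in kept])
print(f"{disqualified:.0%} of the initial list was disqualified")
```

Returning the disqualified proportion alongside the list is deliberate: it is the figure you should be reporting in the write-up anyway.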

Further considerations include your selection method, i.e. how are you choosing the projects to study? If you are examining a very small number of projects, be sure your choice has some careful thought behind it. Generalizing from an analysis of just one or two projects can be tricky; a more focused comparative analysis, such as the work by Schach et al. comparing four different Unix-like operating system kernels, is probably more productive at that level. If you seek to generalize about FLOSS as a phenomenon, a number of studies (including my own) have now done so by analysing large samples of projects. In this case I think the consensus is that random selection from a filtered population of projects is the best approach.
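Random selection from the filtered population is easy to do reproducibly. A minimal sketch, assuming the filtered population is simply a list of project names; the names, the sample size and the seed below are all made up. Fixing the seed (and sorting the population first) means someone else can redraw exactly the same sample from the same list.

```python
import random


def draw_sample(population, k, seed):
    """Draw k distinct projects at random, reproducibly for a given seed."""
    rng = random.Random(seed)      # private generator, so global state is untouched
    ordered = sorted(population)   # input order must not influence the draw
    return rng.sample(ordered, k)  # raises ValueError if k exceeds the population


# Hypothetical filtered population of project names.
filtered = ["delta", "alpha", "kappa", "omega", "sigma", "theta"]

sample = draw_sample(filtered, k=3, seed=2009)
print(sample)
```

Record the seed and the exact filtered list alongside your results; together they are the full specification of the sample.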

And so the end of the selection process should be a list of projects you wish to analyse that feeds through to the next stage: retrieval.

CSMR 2009 soldiers on.

Excellent. The journal publication I co-authored with Andrea Capiluppi and Cornelia Boldyreff (“Identifying exogenous drivers and evolutionary stages in FLOSS projects”) has now been confirmed for publication in the May 2009 edition of Journal of Systems and Software. It is currently available online.
